From Content Factory to Content Engine: Why Workflow Architecture Beats Tool Count in 2026

94% of marketers plan to use AI for content, but only 23% of companies have AI agents fully integrated into production. Learn the 4-level maturity model and why systematic architecture outperforms tool collecting.

Antislop Team

AntiSlop

Here's a paradox that should unsettle every marketing leader: 94% of marketers plan to use AI for content creation in 2026, yet only 23.3% of companies have AI agents fully integrated into their marketing stack in production.

The rest? They're using AI in silos—disconnected tools that don't share context, maintain brand voice, or compound in value. They're stuck in what industry analysts call "Level 1" maturity: ad-hoc AI usage that produces inconsistent results and wastes more time than it saves.

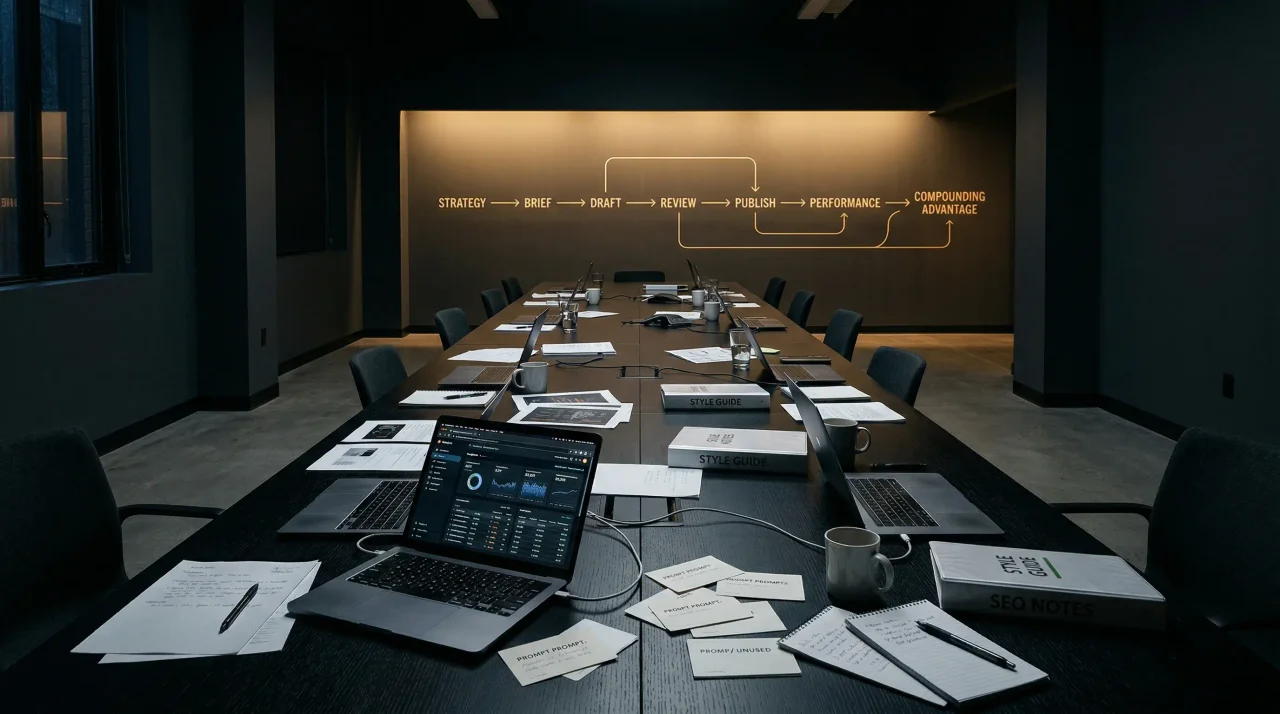

If your team has ChatGPT Plus, Jasper, Copy.ai, and three other AI writing tools in your stack, you're not ahead. You're probably falling behind. The competitive advantage in 2026 isn't having the most AI tools—it's having a systematic workflow architecture that turns those tools into a compounding content engine. If you want the clearest before-and-after framing for operators making that jump, read From AI Writing Tools to Content Agents. And if you need the concrete operating layer beneath that strategy, Content Marketing Automation Workflows That Actually Work in 2026 maps the triggers, handoffs, and publish loops.

The Tool Collection Trap

Most marketing teams approached AI adoption the same way they approached martech for the past decade: buy tools, hope for productivity gains, deal with integration headaches later. That playbook is breaking for three reasons.

First, AI tools compound differently than traditional software. A CRM or email platform delivers consistent value whether you use it alone or as part of a stack. But AI writing tools actually lose value when used in isolation. Each tool starts from zero context every session. Your brand voice, past performance data, editorial standards—none of it persists. The result is voice drift, repetitive research, and content that feels disconnected from everything else you've published.

Second, workflow fragmentation is now the primary bottleneck. According to March 2026 research from Martech.org, teams that scaled automation quickly by building entirely new workflows for every campaign now face a brittleness crisis. Launching a new campaign introduces unpredictable interactions with dozens of existing automations—each with slightly different data normalization logic—and no one is fully sure how a new workflow will interact with everything already running.

Third, the performance gap between workflow maturity levels is structural, not incremental. Teams operating at Level 3 maturity produce 5-10x more content at 75-85% lower cost per article, and their organic growth compounds in a way Level 1 teams cannot match simply by adding more tools.

The data from Averi's 2026 AI Content Marketing Benchmarks Report makes this concrete:

- Companies publishing 16+ posts monthly generate 3.5x more inbound traffic than those publishing 0-4 times per month

- Purpose-built content engines reduce effective cost per article by 85-95% compared to freelance/agency models

- A team producing 3 articles per week through a content engine workflow invests approximately 5 hours total—compared to 25-36 hours through traditional manual processes

The question isn't whether to use AI. It's whether your AI workflow is producing results or just producing content.

If you only have 3 minutes, use this workflow architecture diagnostic

| If this sounds like your team... | You are probably stuck at... | Fix first |
| --- | --- | --- |
| "We keep adding AI tools, but every draft still needs a human to re-explain the brief." | Level 1: Ad hoc AI usage | Capture brand context once and reuse it across every brief |
| "Our tools connect, but one person still has to manually push work between research, drafting, SEO, and CMS." | Level 2: Integrated tools | Eliminate the highest-friction handoff before buying another app |
| "Draft quality is fine. The real slowdown is approvals, formatting, and channel rewrites." | Late Level 2 / early Level 3 | Build workflow rules for QA, publishing, and channel-specific reuse |
| "We publish consistently, but the system still depends on a few humans remembering everything." | Level 3: Content engine | Turn tribal knowledge into documented governance and persistent context |

If your pain is still mostly blank-page anxiety, you do not need a more complex architecture yet. But if your real drag is coordination, context resets, and post-draft cleanup, workflow design matters more than tool count.

Fast read: if your team keeps adding AI tools but still rewrites every draft, re-briefs the system every week, and manually pushes content into the CMS, you do not have a tooling problem. You have a workflow architecture problem.

That starts earlier than most teams think. If the inputs are vague, the workflow just scales bad drafts faster. Our guide to content briefs for AI writers explains how to tighten the brief layer before you automate the rest. And if you're still evaluating whether an AI copywriting tool will actually reduce cleanup debt, use the decision framework in AI Copywriting Tool Buyer's Guide for 2026 before adding another app to the stack.

The 4 Levels of AI Content Maturity

Based on current industry benchmarks, AI content operations fall into four distinct maturity levels. Knowing where you stand is the first step toward improvement.

Level 1: Ad Hoc AI Usage

Characteristics: Using ChatGPT or similar tools for one-off tasks. No persistent brand context. No content strategy architecture. No optimization framework. No compounding.

The experience: You open an AI tool, write a detailed prompt, get a draft that needs heavy editing, rewrite most of it anyway, and repeat this process for every piece of content. Each session starts from zero. Brand voice is maintained through memory and manual oversight—both of which fail at scale.

The results: Inconsistent quality, occasional wins, no systematic growth. This is where approximately 50% of marketing teams operate in 2026.

Level 2: Integrated AI Tools

Characteristics: Multiple AI tools connected through manual workflows. SEO platform + AI writing tool + CMS + analytics, operated by a human who serves as the integration layer. Brand voice maintained through style guides that are inconsistently applied.

The experience: You've connected your tools with Zapier or native integrations. Content moves between systems, but someone still needs to manually brief the AI, check brand alignment, optimize for search, and handle publishing. The workflow works, but it's fragile and high-overhead.

The results: Measurable improvement over manual processes, but high time overhead and brand voice drift at scale. Approximately 30% of teams operate here.

Level 3: AI Content Engine

Characteristics: Purpose-built platform with persistent brand context, strategic architecture, AI drafting with multi-dimensional scoring, native CMS publishing, and analytics feedback loops. The system compounds—every output makes the next one better.

The experience: Your brand voice, positioning, ICPs, and competitive landscape are learned once and applied to every output. Topic recommendations arrive proactively based on keyword data, competitor gaps, and strategy map whitespace. Drafts arrive pre-optimized with question-based headings, answer blocks for AI citation, sourced statistics, and internal links. Publishing happens natively. Analytics inform future recommendations.

The results: Compound organic growth, consistent quality at velocity, AI citation capture. This is where approximately 15% of teams operate—and where the real performance gap opens.

Level 4: Autonomous Content Operations

Characteristics: AI agents that proactively create, optimize, publish, and iterate with minimal human oversight. Humans provide strategic direction and editorial judgment. Systems self-improve based on performance data.

The experience: Agents monitor performance data, identify content gaps, generate briefs, produce drafts, and optimize existing content—all within boundaries you've defined. Humans focus on strategic decisions, creative direction, and high-stakes editorial calls.

The results: Outsized leverage for lean teams. Only about 5% of teams operate here, mostly early adopters with strong data infrastructure.

Why Workflow Architecture Determines Success

The transition from Level 1 to Level 3 isn't about buying better tools. It's about designing a workflow architecture that solves four specific problems.

Problem 1: The Context Reset

Every time you open a general-purpose AI tool, you start from zero. It doesn't remember your brand voice, your past content performance, your competitive positioning, or your editorial standards. You're re-briefing the AI every single session.

The architectural solution: Persistent brand context, captured once and applied everywhere. Your brand voice, positioning framework, ICP definitions, and competitive differentiation are stored in a structured format that the AI references for every output. No more re-briefing. No more voice drift. For a concrete example of what this looks like inside a client-services business, see how content agencies scale without hiring once voice capture becomes part of the operating model.

If your team is an agency rather than an in-house content team, that voice-capture layer is the difference between adding headcount and actually improving throughput. The agency version of the problem is rarely "we need more writers." It is "we have no reusable model of how each client thinks," and fixing that is what reduces approval drag rather than just speeding up drafts.
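To make "structured format" concrete, here is a minimal Python sketch, assuming brand context is stored as one typed record and prepended to every drafting request. The `BrandContext` fields and the `build_system_prompt` helper are hypothetical illustrations, not any particular platform's schema or API.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class BrandContext:
    """Hypothetical schema: brand knowledge captured once, then reused for every output."""
    voice: str
    positioning: str
    icps: list[str]
    differentiators: list[str]
    banned_phrases: list[str] = field(default_factory=list)


def build_system_prompt(ctx: BrandContext, task: str) -> str:
    """Prepend the same stored context to every task, so no session starts from zero."""
    lines = [
        f"Voice: {ctx.voice}",
        f"Positioning: {ctx.positioning}",
        "Ideal customer profiles: " + "; ".join(ctx.icps),
        "Differentiators: " + "; ".join(ctx.differentiators),
    ]
    if ctx.banned_phrases:
        lines.append("Never use: " + ", ".join(ctx.banned_phrases))
    lines.append(f"Task: {task}")
    return "\n".join(lines)


if __name__ == "__main__":
    ctx = BrandContext(
        voice="Plain, direct, evidence first",
        positioning="Workflow architecture over tool count",
        icps=["Heads of content at B2B SaaS companies"],
        differentiators=["Persistent brand context", "Native CMS publishing"],
        banned_phrases=["game-changer", "unlock"],
    )
    print(build_system_prompt(ctx, "Draft a post on review rules"))
```

The design choice is that context lives in one place: update the record once and every later request inherits the change, which is what stops voice drift between sessions.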

Problem 2: The Strategy Gap

Most AI content production is reactive: "We need a blog post about X." There's no strategic architecture connecting individual pieces to broader topical authority, keyword clusters, or buyer journey stages. Content is produced in isolation, missing the compounding effect of interconnected coverage.

The architectural solution: A strategy map that defines pillar topics, content clusters, and the relationship between pieces. The AI doesn't just write—it knows where each piece fits in the broader content ecosystem and optimizes for internal linking, topical coverage, and journey alignment.

Problem 3: The Quality Control Bottleneck

AI can produce content fast, but someone still needs to check it for brand alignment, factual accuracy, SEO optimization, and editorial quality. When that someone is a senior team member reviewing every draft, you've just moved the bottleneck from writing to editing.

The architectural solution: Multi-dimensional scoring built into the drafting process. Content is scored in real time across SEO (40%), AEO (answer engine optimization, 25%), and GEO (generative engine optimization, 35%), with checks for question-based headings, answer block structure, sourced statistics, internal linking, and AI citation optimization before human review.
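The weighting itself is simple arithmetic. A minimal Python sketch, assuming each dimension has already been scored on a 0-100 scale by separate checks (the text does not say how those per-dimension scores are produced) and that drafts below a hypothetical threshold of 70 go back for revision before a human sees them:

```python
# Dimension weights from the text: SEO 40%, AEO 25%, GEO 35%.
WEIGHTS = {"seo": 0.40, "aeo": 0.25, "geo": 0.35}


def composite_score(scores: dict[str, float], weights: dict[str, float] = WEIGHTS) -> float:
    """Weighted average of per-dimension scores (each assumed to be 0-100)."""
    missing = weights.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing dimension scores: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(scores[dim] * w for dim, w in weights.items()) / total_weight


def ready_for_review(scores: dict[str, float], threshold: float = 70.0) -> bool:
    """Hypothetical gate: only drafts at or above the threshold reach a human editor."""
    return composite_score(scores) >= threshold


if __name__ == "__main__":
    draft = {"seo": 80, "aeo": 60, "geo": 70}
    print(composite_score(draft))  # 0.40*80 + 0.25*60 + 0.35*70
    print(ready_for_review(draft))
```

Dividing by the total weight keeps the result on the 0-100 scale even if someone later adjusts the weights so they no longer sum to exactly 1.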

Problem 4: The Publishing Friction

Even perfect drafts face friction getting live: CMS formatting, image sourcing, metadata entry, scheduling, distribution. Each step introduces delay and error potential.

The architectural solution: Native publishing that handles formatting, optimization, and scheduling automatically. Drafts flow directly to your CMS with proper structure, optimized metadata, and scheduled publication—no manual copy-paste, no formatting fixes.

The Governance Factor

Here's something that surprises most teams: governance isn't a brake on content velocity—it's an accelerator.

When governance is explicit, teams move faster without sacrificing trust. Clear boundaries reduce backtracking and approval bottlenecks. Everyone knows what's allowed, what's required, and who signs off on what.

According to Prose Media's 2026 AI marketing framework, practical governance includes:

- Approved tools (preventing shadow IT and data leakage)

- Allowed and prohibited data (protecting customer privacy and competitive intelligence)

- Review rules by workflow (automated checks for low-risk content, human oversight for high-stakes pieces)

- Brand and editorial QA (consistent standards without micromanagement)

- Disclosure rules where relevant (transparency for AI-assisted content)

- Documentation for prompts and automations (preventing knowledge loss when team members change)

- Rollback steps when something goes sideways (confidence to move fast knowing you can recover)

Teams with lightweight but mandatory governance report significantly fewer errors, faster publication cycles, and higher confidence in content quality. The goal isn't bureaucratic control—it's faster, more confident publishing.

What Actually Differentiates Content in 2026

In March 2026, Ahrefs published a direct assessment: "AI Content Wasn't Good Enough. Now It Is."

Their analysis found that the quality gap between AI content and skilled human writing has narrowed so far that human authorship, by default, no longer reliably differentiates content on rankings or reader experience.

This changes everything.

If "human-written" is no longer a competitive advantage, what is? The new differentiators:

Original data and research. AI can synthesize existing information brilliantly. It cannot conduct original surveys, run experiments, or gather proprietary data. Content based on first-party research—your own benchmarks, customer studies, product data—stands out in a sea of synthesized summaries.

Direct experience and expertise. AI can write credibly about topics it's been trained on. It cannot share personal experiences, lessons from failed experiments, or insights from years of specialized practice. Expertise that comes from doing the work, not reading about it, remains irreplaceable.

Nuance and judgment. AI struggles with contextual nuance, ethical gray areas, and strategic trade-offs. Human judgment about what to say, how to say it, and when to deviate from conventional wisdom creates content that resonates on a different level.

Editorial control and curation. The teams winning with AI content aren't the ones generating the most words—they're the ones with the strongest editorial filters. Knowing what to cut, what to emphasize, and how to structure an argument for maximum impact is still a human skill.

The takeaway: AI handles execution speed. Humans handle strategic direction, original research, and editorial judgment. The winning combination is systematic AI workflow + strong human oversight—not more AI tools without coordination.

The 90-Day Transition Plan

Moving from Level 1 to Level 3 doesn't happen overnight, but it doesn't require a year either. Here's a practical roadmap:

Days 1-30: Stabilize Workflow Quality

- Audit your current state: Map every AI tool, workflow, and handoff in your content process. Identify where context gets lost, where bottlenecks form, and where errors typically occur.

- Define your brand context: Document voice, positioning, ICPs, competitive differentiation, and editorial standards in a structured format that can be referenced systematically.

- Establish baseline metrics: Track current time-to-publish, cost per article, revision rate, and organic performance. You'll need these to measure improvement.

- Map review rules: Define what gets automated checks vs. human review. Not everything needs senior editor oversight—most content needs systematic quality gates, not human judgment at every step.
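Written down as rules rather than habits, a review map can be as small as a routing function. This Python sketch assumes each draft arrives with a 0-100 QA score from automated checks plus a few flags; the content types, flags, route names, and the threshold of 80 are all hypothetical placeholders for whatever your own review rules define.

```python
from dataclasses import dataclass

# Hypothetical: content types that always get senior human review.
HIGH_STAKES_TYPES = {"press_release", "landing_page", "legal"}


@dataclass
class Draft:
    content_type: str              # e.g. "blog", "newsletter", "press_release"
    qa_score: float                # 0-100, produced by automated checks
    mentions_pricing: bool = False
    cites_statistics: bool = False


def review_route(draft: Draft, auto_threshold: float = 80.0) -> str:
    """Return the review path for a draft; checks run from highest to lowest risk."""
    if draft.content_type in HIGH_STAKES_TYPES or draft.mentions_pricing:
        return "senior_editor"
    if draft.cites_statistics:
        return "fact_check"        # numbers need source verification even on low-risk pieces
    if draft.qa_score >= auto_threshold:
        return "auto_approve"
    return "standard_edit"
```

Ordering the checks from highest to lowest risk means a strong QA score can never let a high-stakes piece skip human review.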

Days 31-60: Build the Engine

- Centralize brand context: Move from documents that humans read to structured data that AI can reference. This is the foundation everything else builds on.

- Standardize brief templates: Define input structures for different content types. Standardization reduces revision churn and makes AI output more predictable. If your team needs a working model instead of a blank document, start with our content briefs for AI writers template and adapt it by format.

- Implement systematic QA: Build quality checks into the workflow—SEO optimization, brand voice alignment, factual verification—rather than treating them as final review steps.

- Connect publishing: Eliminate manual handoffs between drafting and publication. The goal is smooth flow from approved draft to live content.
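One way to enforce standardized briefs is to reject a brief before drafting starts if any required field is empty. A minimal Python sketch; the content types and field names below are hypothetical examples, not a prescribed template:

```python
# Hypothetical required fields per content type.
REQUIRED_FIELDS: dict[str, list[str]] = {
    "blog": ["audience", "primary_keyword", "angle", "sources", "cta"],
    "linkedin": ["audience", "hook", "proof_point", "cta"],
}


def _is_empty(value: object) -> bool:
    """Treat None, blank strings, and empty lists/tuples/sets/dicts as missing."""
    if value is None:
        return True
    if isinstance(value, str):
        return not value.strip()
    if isinstance(value, (list, tuple, set, dict)):
        return len(value) == 0
    return False


def missing_fields(content_type: str, brief: dict) -> list[str]:
    """Return required fields that are absent or empty, in template order."""
    if content_type not in REQUIRED_FIELDS:
        raise ValueError(f"no brief template for content type {content_type!r}")
    return [f for f in REQUIRED_FIELDS[content_type] if _is_empty(brief.get(f))]


if __name__ == "__main__":
    brief = {
        "audience": "Heads of content at B2B SaaS companies",
        "primary_keyword": "content engine",
        "angle": "  ",
        "sources": [],
        "cta": "Book a workflow audit",
    }
    print(missing_fields("blog", brief))  # "angle" is blank and "sources" is empty
```

Catching an incomplete brief at intake is cheaper than discovering it as revision churn after the draft exists.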

Days 61-90: Measure and Optimize

- Compare against baseline: Did time-to-publish drop? Did revision rates fall? Is organic performance improving? Quantify the gains.

- Expand what works: Double down on workflows that improved speed, quality, or performance. Kill the ones that didn't.

- Capture learnings: Document what worked, what didn't, and how the workflow should evolve. This becomes your playbook for scaling further.

- Decide what stays internal: Some content needs deep strategic thinking and original research—keep that in-house. Other content is execution-heavy—automate it aggressively.
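Comparing against the baseline captured in days 1-30 comes down to a percent change per metric. A minimal Python sketch; the metric names and the example numbers are illustrative, not benchmarks. For time-to-publish, cost per article, and revision rate, a negative change is an improvement; for organic performance, a positive one is.

```python
def percent_change(baseline: float, current: float) -> float:
    """Relative change from baseline, in percent (negative means the metric went down)."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero to compute a percent change")
    return (current - baseline) / baseline * 100


def compare_to_baseline(baseline: dict[str, float], current: dict[str, float]) -> dict[str, float]:
    """Percent change for every metric present in both snapshots, rounded to one decimal."""
    return {
        metric: round(percent_change(baseline[metric], current[metric]), 1)
        for metric in baseline
        if metric in current
    }


if __name__ == "__main__":
    # Illustrative numbers only.
    day_0 = {"time_to_publish_days": 10, "cost_per_article": 400, "revision_rate": 0.5}
    day_90 = {"time_to_publish_days": 6, "cost_per_article": 150, "revision_rate": 0.3}
    for metric, change in compare_to_baseline(day_0, day_90).items():
        print(f"{metric}: {change:+.1f}%")
```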

The Real Risk: Analysis Paralysis

There's a trap that's catching teams as they evaluate AI content solutions: waiting for the perfect tool instead of improving the workflow they have.

The vendors will tell you their platform solves everything. The consultants will tell you that you need a 6-month planning phase. The competitors are publishing while you're still evaluating.

The reality: you can move from Level 1 to Level 2 with better documentation and process discipline. You can reach Level 3 with purpose-built tools that fit your existing stack. You don't need to rebuild everything. You need to solve the specific problems that are slowing you down.

A practical rule for picking the first fix

- If drafts are weak before editing begins, fix the brief + context layer first.

- If drafts are decent but cleanup takes forever, fix the QA + review rules first.

- If approved work still sits in docs for days, fix the publishing handoff first.

- If one idea keeps being rewritten manually for every channel, fix the repurposing architecture first.

Start with the biggest bottleneck. Small improvements compound faster than perfect plans that never launch. If you are still stuck at the vendor-comparison stage, our best AI content writers in 2026 breakdown is the practical shortcut for deciding which tool actually fits your workflow, and From AI Writing Tools to Content Agents explains when the real fix is orchestration rather than a better draft generator.

The Bottom Line

The teams winning with AI content in 2026 aren't the ones using the most tools. They're the ones with the clearest workflow design, the best judgment about where humans still matter, and the discipline to turn AI from novelty into operating leverage.

The maturity model is clear. The benchmarks are published. The gap between Level 1 and Level 3 is widening every quarter. The only question is whether you'll close it—or watch competitors do it first.

Your AI tools are only as good as the workflow architecture that connects them. Build the engine, not just the factory.


Ready to kill the slop?

AntiSlop learns your voice and creates content that sounds unmistakably you.
